Beyond the AI Stack: The Real Work Behind Successful Enterprise AI Agents

The technology works. So why do 95% of AI projects fail to deliver?

It has never been easier to build an AI application. A prototype that took six months two years ago can now be stood up in a week. The tools are better, the models are more capable, and the barrier to entry has never been lower. And yet 95% of generative AI projects fail to deliver measurable business impact.

MIT's "The GenAI Divide: State of AI in Business 2025" report, based on 150 interviews with business leaders, a survey of 350 employees, and analysis of 300 public deployments, found that only 5% of generative AI pilots achieved rapid revenue acceleration. The rest stalled, delivering little to no measurable impact on P&L.

The technology works and the models are capable, so what's going wrong?

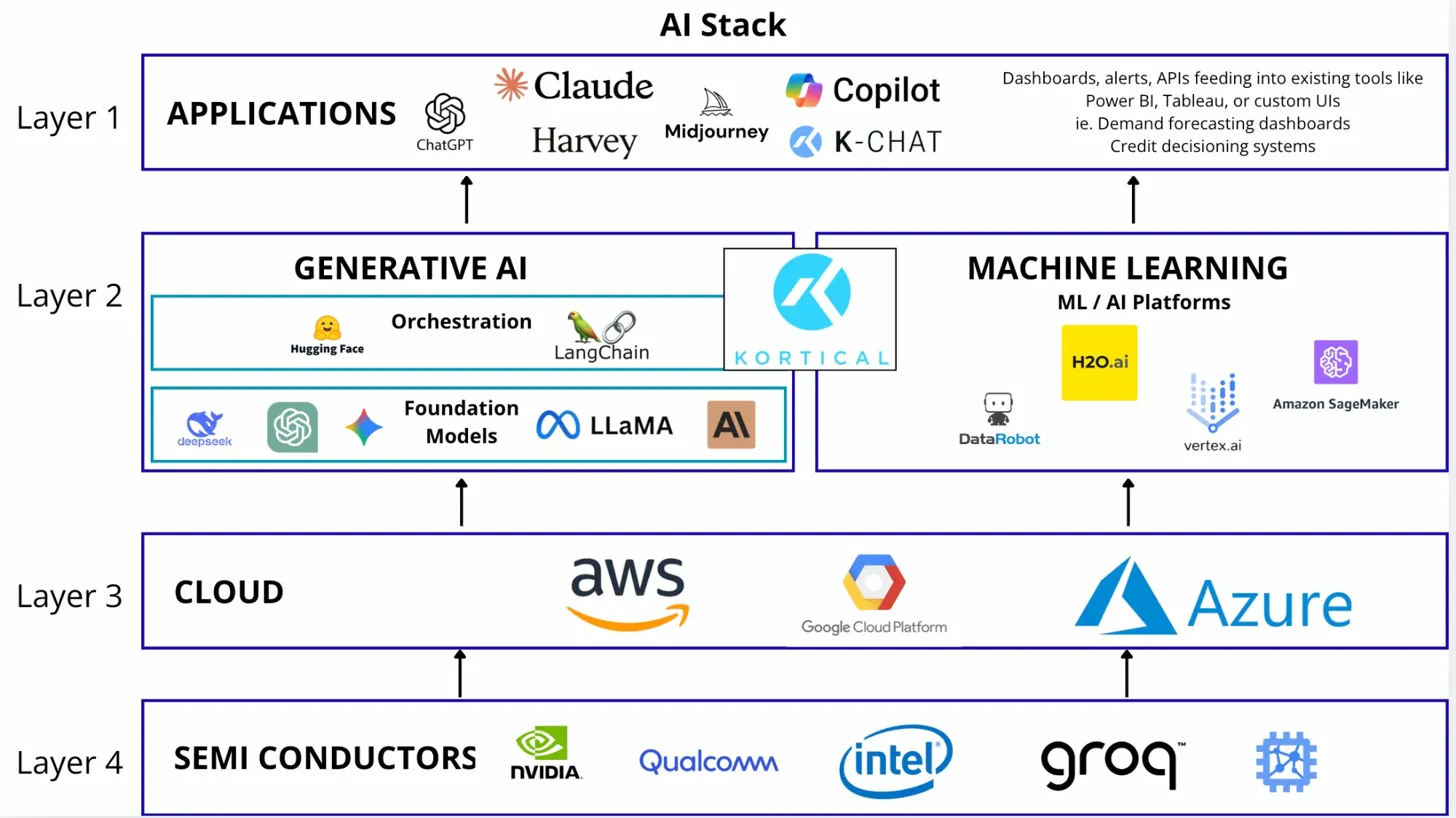

What is the AI Agent Stack (quick primer)

If you're not familiar with the AI stack, here's the short version. It's the set of technology layers that power AI applications: semiconductors, cloud infrastructure, orchestration, foundation models and applications.

The stack has two paths that both end at applications:

- Generative AI path: You use pre-trained foundation models and orchestrate them into agents for things like customer support, knowledge retrieval, or content generation.

- Machine Learning path: You build custom models from your own data for things like credit scoring, demand forecasting, or predictive maintenance.

Both paths share the same foundation (semiconductors and cloud) and converge at the same destination: applications.

Value is created at the application layer. The layers below only succeed if the applications built on top generate enough revenue to pay for them.

Most companies understand this. They pick their tools, assemble their stack, and start building.

And then they get stuck.

The Paradox: Easier to Build, Harder to Ship

Here's what the AI stack diagram doesn't show you: the organisational, operational, and strategic work that determines whether a project actually delivers value.

When projects fail, it's rarely because the model doesn't work. It's because of everything around the model.

1. Use Case Selection

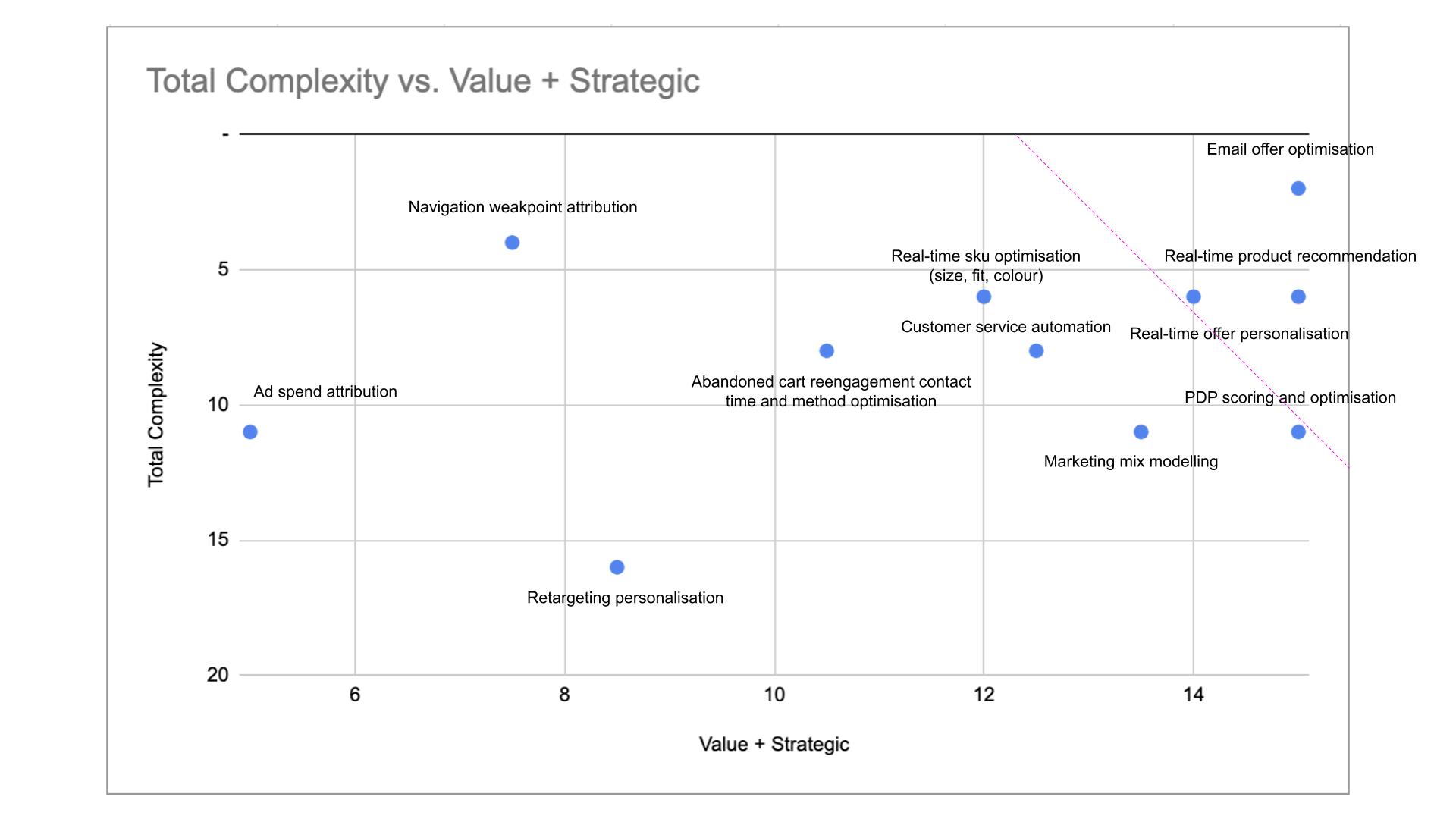

Most AI projects fail before they start by picking the wrong problem. Teams chase what's technically interesting rather than what's commercially valuable. They build proofs of concept that impress in demos but don't map to a real workflow or business outcome.

The right use case has clear ROI, available data, a defined user, and a realistic path to production. Getting this wrong means months of work on something that will never ship.

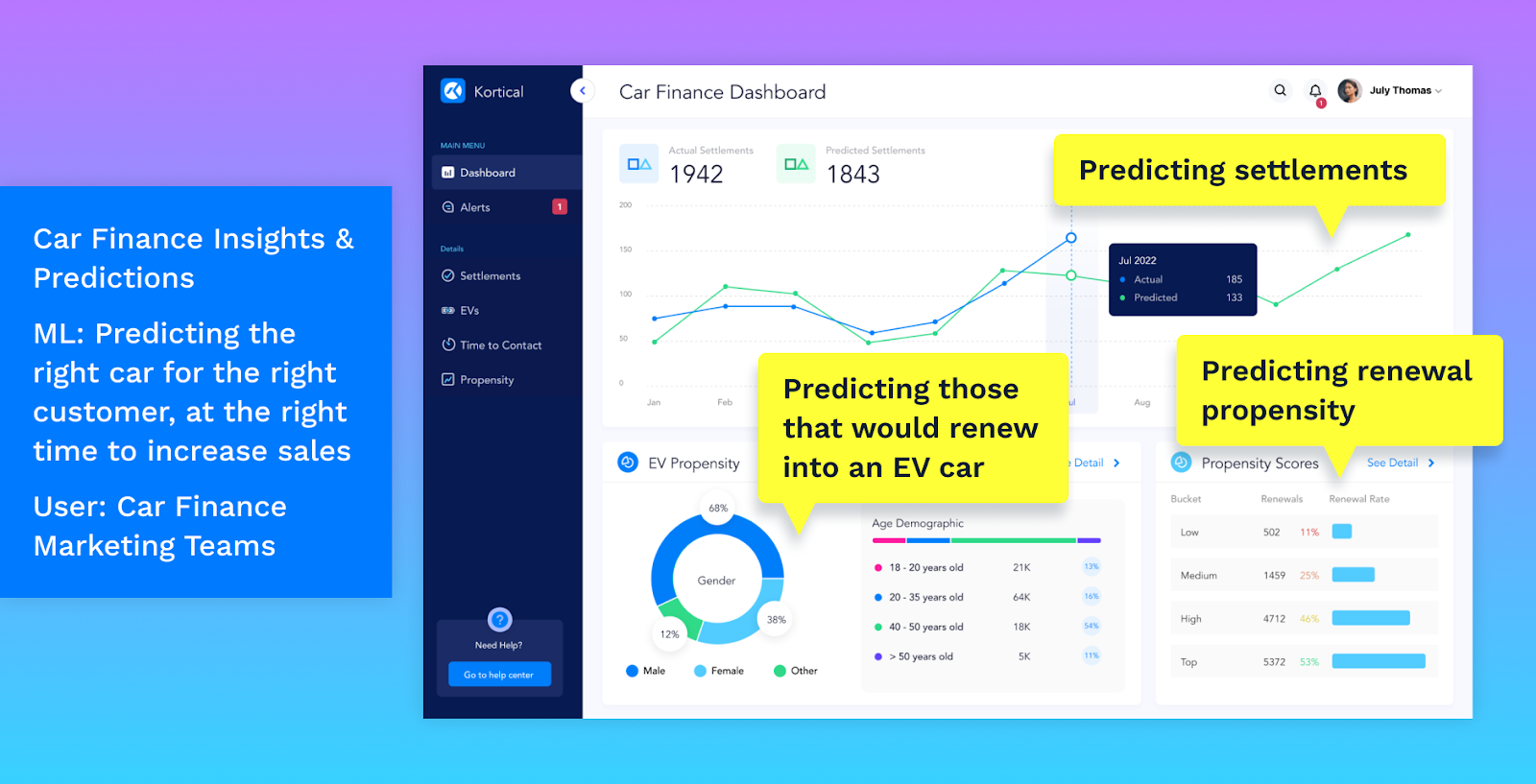

At Kortical, we use an AI roadmapping framework to help clients identify what we call the "lighthouse" use case. This is the starting point with the highest likelihood of success and the most visible impact. By scoring potential use cases on business value, strategic alignment, solution complexity, and data complexity, you can map them visually and find the sweet spot: high value, low complexity.
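The scoring idea above can be sketched in a few lines of Python. This is an illustration only, not Kortical's actual framework: the 1 to 5 scale, the equal weighting of the four dimensions, the `UseCase` class, the `pick_lighthouse` helper, and the example candidates are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A candidate use case scored on four dimensions (assumed 1-5 scale)."""
    name: str
    business_value: int       # 1 = low value, 5 = high value
    strategic_alignment: int  # 1 = poor fit, 5 = strong fit
    solution_complexity: int  # 1 = simple, 5 = very complex
    data_complexity: int      # 1 = simple, 5 = very complex

    @property
    def value(self) -> float:
        # Value axis: average of the two "upside" scores.
        return (self.business_value + self.strategic_alignment) / 2

    @property
    def complexity(self) -> float:
        # Complexity axis: average of the two "effort" scores.
        return (self.solution_complexity + self.data_complexity) / 2


def pick_lighthouse(use_cases: list[UseCase]) -> UseCase:
    """Return the candidate with the largest gap between value and complexity."""
    return max(use_cases, key=lambda u: u.value - u.complexity)


# Hypothetical candidates, invented for this example.
candidates = [
    UseCase("Invoice triage", 4, 4, 2, 2),
    UseCase("Autonomous pricing", 5, 4, 5, 4),
    UseCase("Meeting summaries", 2, 2, 1, 1),
]
lighthouse = pick_lighthouse(candidates)
print(lighthouse.name)  # Invoice triage
```

In this sketch the highest-value idea ("Autonomous pricing") loses out because its complexity cancels its value, which is the point of looking for the high-value, low-complexity quadrant rather than ranking on value alone.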

See the full framework: Build better AI solutions with an AI roadmap

2. Data Readiness

AI runs on data. But most enterprise data is fragmented, inconsistent, undocumented, or locked in systems that don't talk to each other. Teams underestimate the work required to get data into a usable state and they underestimate how much domain expertise is needed to understand what the data actually means.

One of the biggest blockers we see is that the data exists, but you can't get access to it. Or the data is collected, but the way it's structured isn't suitable for AI. We've seen projects fail simply because teams determined the use case first without checking what data was actually available.

A data readiness assessment before you build anything saves months of rework later. This means bringing together people who understand where the data lives, what format it's in, who owns it, and what it will take to access it. Ideally this happens as part of the roadmapping process, not after a use case has already been locked in.

This is why our AI roadmapping sessions always include a data expert alongside the domain expert, decision-maker, and AI applications expert. The data expert is someone who can bridge business context with deep technical knowledge to spot what's actually achievable.

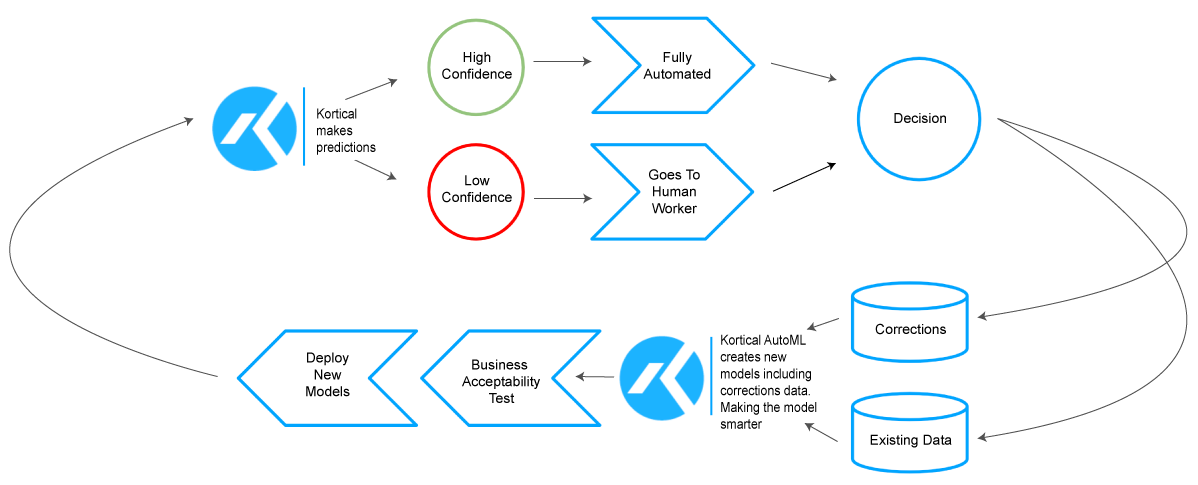

3. Human-in-the-Loop Design

AI rarely replaces a workflow entirely. It augments it. That means designing how humans and AI work together: where does the AI make decisions autonomously? Where does it surface recommendations for a human to approve? What happens when the AI is wrong?

Get this wrong and you either over-automate (creating risk) or under-automate (creating no value). The interface between human and AI is a design problem, not a technology problem.

A well-designed human-in-the-loop system looks like this in practice: Kortical's Deloitte Tax Automation solution is fully automated and self-learning, yet every model update goes through reproducibility testing and business-defined acceptability checks before deployment.

The cycle works as follows:

- AI makes predictions

- High-confidence predictions are fully automated; low-confidence predictions go to a human worker

- Human corrections are fed back into the system

- Kortical AutoML creates new models incorporating the corrections data

- Models pass business-defined acceptability tests before deployment

- Updated models deploy and the cycle continues

The result: the AI gets smarter over time, but humans stay in control of what gets deployed.
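The routing and feedback steps in the cycle above can be sketched as follows. This is a minimal illustration under stated assumptions, not the Deloitte solution's code: the 0.90 threshold, the `Prediction` and `ReviewLoop` classes, the `passes_acceptability` check, and the example documents are all hypothetical, and the retraining step is left out entirely.

```python
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.90  # hypothetical cut-off; in practice set by the business


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model's confidence in `label`, between 0 and 1


@dataclass
class ReviewLoop:
    """Routes predictions and collects human corrections for later retraining."""
    threshold: float = CONFIDENCE_THRESHOLD
    automated: list = field(default_factory=list)
    review_queue: list = field(default_factory=list)
    corrections: list = field(default_factory=list)

    def route(self, pred: Prediction) -> str:
        # High-confidence predictions are automated; the rest go to a person.
        if pred.confidence >= self.threshold:
            self.automated.append(pred)
            return "automated"
        self.review_queue.append(pred)
        return "human_review"

    def record_correction(self, pred: Prediction, corrected_label: str) -> None:
        # The reviewer's answer becomes training data for the next model version.
        self.review_queue.remove(pred)
        self.corrections.append((pred.item_id, corrected_label))


def passes_acceptability(candidate_accuracy: float, min_accuracy: float) -> bool:
    """Stand-in for a business-defined acceptability test before deployment."""
    return candidate_accuracy >= min_accuracy


loop = ReviewLoop()
confident = Prediction("doc-1", "deductible", 0.97)
uncertain = Prediction("doc-2", "deductible", 0.61)
print(loop.route(confident))   # automated
print(loop.route(uncertain))   # human_review
loop.record_correction(uncertain, "non-deductible")
print(passes_acceptability(candidate_accuracy=0.93, min_accuracy=0.95))  # False
```

The threshold is the main design lever here: raising it sends more work to people and lowers risk, while lowering it automates more and relies more heavily on the acceptability gate.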

4. Change Management

AI changes how people work, and that's often a good thing, but it requires deliberate management. Employees may initially have questions about job security, or feel uncertain about outputs they don't yet understand. The organisations that navigate this best treat change management as a core part of the project, not an afterthought.

Successful AI deployments invest in training, communication, and early involvement. When end users help shape the solution and understand how it reaches its conclusions, adoption follows naturally. The technology is rarely the barrier — building confidence and capability in the people using it is what makes the difference.

5. Compliance and Governance

In regulated industries such as financial services, healthcare, and insurance, AI needs to be explainable, auditable, and compliant. That means model documentation, bias testing, audit trails, and clear accountability for decisions.

The good news is that compliance is entirely achievable when it's built into the project from the start. Teams that embed explainability and governance requirements into their AI design process end up with more robust, deployable solutions and a faster path to sign-off.

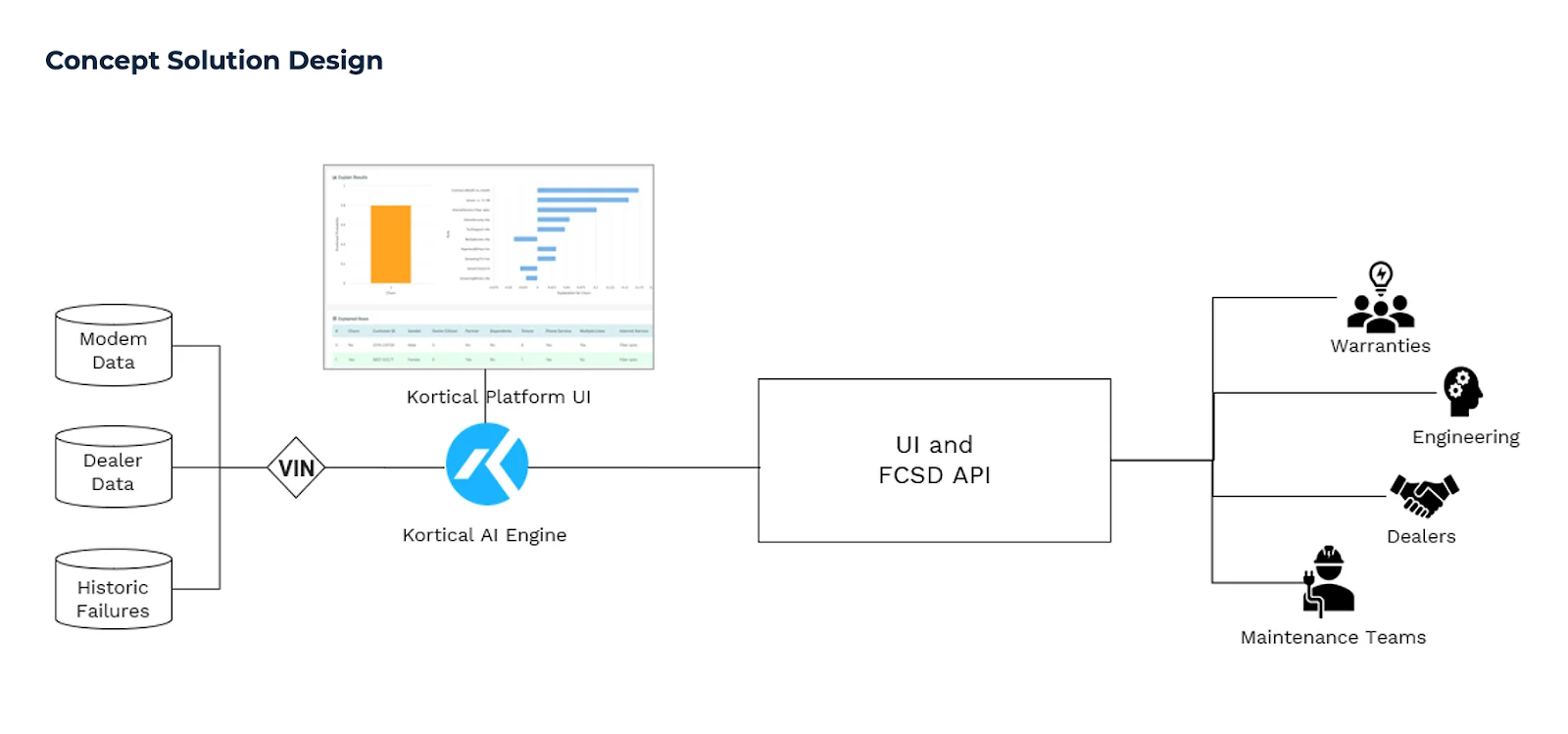

6. Integration with Existing Systems

AI doesn't operate in isolation. It needs to connect to CRMs, ERPs, data warehouses, customer service platforms, and legacy systems that weren't designed for real-time AI inference.

Integration is often the longest and most expensive part of an AI project. It requires understanding not just APIs, but business processes, data flows, and organisational politics.

Here's an example from our work with Ford. The predictive maintenance system pulls data from multiple sources (modem data, dealer data, and historic failures), processes it through the Kortical AI Engine, and delivers predictions via API to four different business functions: Warranties, Engineering, Dealers, and Maintenance Teams. Each of those teams has different needs and different systems, and the AI has to plug into all of them.

7. Ongoing Monitoring and Optimisation

AI systems degrade over time. Data distributions shift. User behaviour changes. Models that performed well at launch start making worse predictions.

Production AI requires monitoring, alerting, retraining pipelines, and a team that knows when to intervene. Shipping is not the finish line; it's the starting point.
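One common way to detect the distribution shift described above is the Population Stability Index (PSI), which compares a feature's distribution at training time with its distribution in production. The sketch below is a generic illustration, not a description of any particular monitoring product: the function name, the equal-width binning, and the 0.25 alert level (a widely used rule of thumb, not a standard) are assumptions.

```python
import math


def population_stability_index(baseline, live, bins=10):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids taking log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = proportions(baseline)
    actual = proportions(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


PSI_ALERT_LEVEL = 0.25  # rule-of-thumb threshold, assumed for this example

baseline = [i / 100 for i in range(100)]   # feature values at launch
shifted = [v + 0.5 for v in baseline]      # same feature after the data drifts

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
print(psi_shifted > PSI_ALERT_LEVEL)  # True: this would trigger a retraining review
```

A check like this would typically run on a schedule for each important input feature, with an alert raised for a person to decide whether retraining is warranted.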

Why Internal Builds Struggle

Look at the AI stack diagram and you'll see dozens of tools you can use: LangChain, LlamaIndex, SageMaker, Vertex AI, and more. An internal team could theoretically stitch these together.

But stitching together tools doesn't solve the problems above. In fact, it often makes them worse. Internal teams end up spending all their time on tooling and none on use case validation, change management, or compliance. They build impressive prototypes that never make it to production.

The MIT report found that purchasing AI tools from specialised vendors and building partnerships succeeded roughly 67% of the time, while internal builds succeeded only a third as often.

The gap isn't capability. It's focus. Internal teams are generalists trying to solve a specialist problem.

Kortical: Built to Beat the 95%

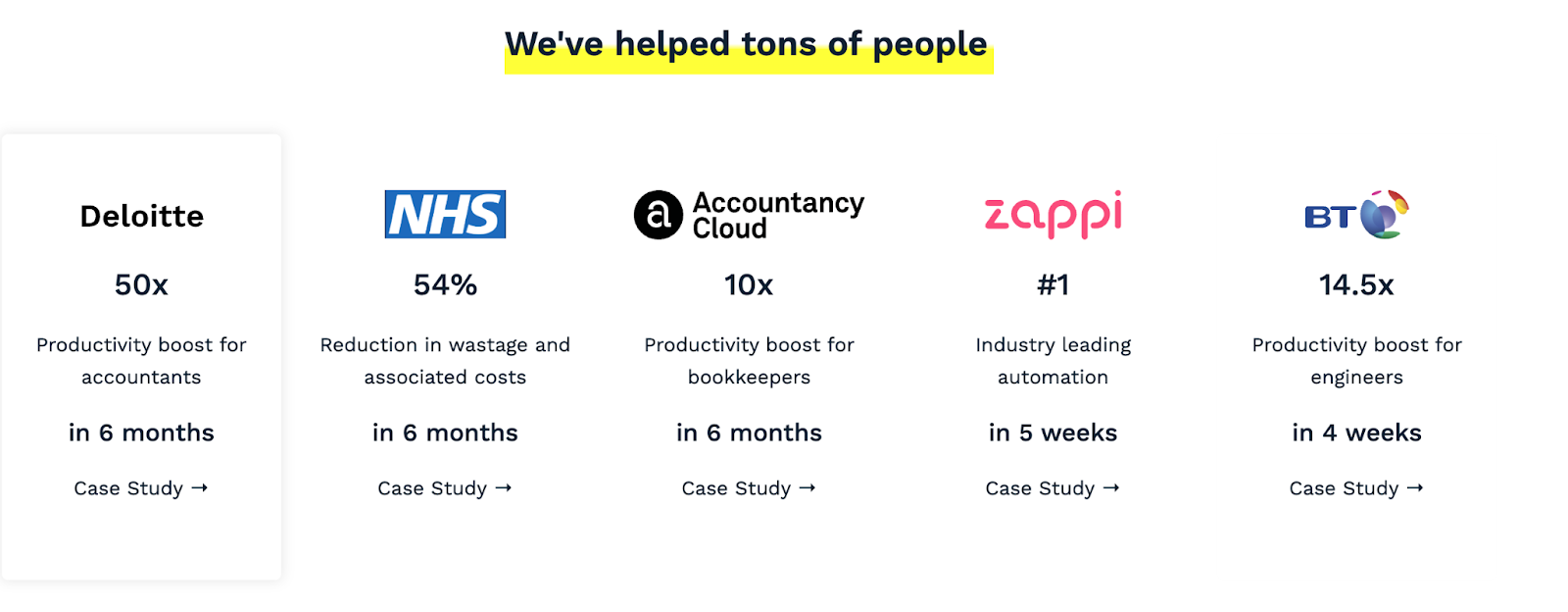

Kortical is an AI delivery partner that combines proprietary technology with hands-on expertise to get AI into production inside enterprises, typically in 5 weeks to 6 months rather than the 2 years most enterprises take.

We've been building AI for enterprises since 2016. Our clients include Santander, Ford, Charlotte Tilbury, and Deloitte. In 2024 alone, our systems powered over 1 billion predictions, 80 million recommendations, and 2 million conversations, all live in production.

Gartner data shows most companies take two years to move from pilot to production. Our customers typically do it in five weeks to six months.

That speed comes from genuine depth in AI. After nearly a decade of delivering AI in production, we understand the tooling, we understand the problems, and when existing tools don't get the job done, we build our own. We have a patented AI platform for machine learning that handles data exploration, model building, explainability, deployment, and full audit trails, and an agentic AI framework that orchestrates multi-step reasoning, tool usage, memory, and workflows to deliver AI agents with guardrails, integrations, and cost optimisation built in from day one.

But the technology is only part of it. We also bring:

- Use case workshops to identify high-ROI opportunities before any code is written

- Data readiness assessments to surface gaps early

- Human-in-the-loop design that balances automation with appropriate oversight

- Change management support to drive adoption

- Compliance and governance built into our platform — explainability, audit trails, bias testing

- Deep integration expertise across enterprise systems

- Ongoing monitoring and optimisation so models keep performing after launch

We're not just a consultancy, and we're not just a self-serve platform. We're both. We're the team that gets AI working inside your business and keeps it working, with enterprise-grade reliability and 99.9% uptime.

Closing Thoughts

The biggest wins in AI won't come from better models or more sophisticated tooling. They'll come from teams who understand that the stack is the easy part — and who invest in the use case selection, data readiness, change management, and operational rigour that actually determine success.

The companies that figure this out first won't just adopt AI. They'll pull ahead of competitors who are still stuck in pilot mode.

That's the opportunity. And it's wide open.

If you're exploring how to apply AI in your business and looking for a partner who can actually deliver it into production, we'd love to hear from you. Get in touch.

FAQs

What Are Semiconductors in AI?

AI requires enormous processing power. Building an AI model means processing vast amounts of data, and every time someone uses that model, a chip has to process the request and return an answer in seconds.

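General-purpose computer chips (CPUs) aren't fast enough for this, because AI workloads involve huge numbers of calculations that need to run in parallel. That's why companies like NVIDIA design chips built specifically for AI. These chips are the foundation everything else runs on.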

Examples:

- NVIDIA – GPUs (H100, A100) powering most AI training and inference

- AMD – MI300 accelerators

- Google – TPUs (custom AI chips)

- Apple – Neural Engine (on-device AI)

- Groq – LPUs built for fast inference

What Is Cloud Infrastructure in AI?

Cloud infrastructure is the computing power, storage, and networking that AI runs on. These companies buy chips (often NVIDIA's), manage massive data centres, and rent compute to everyone else.

Examples:

- AWS

- Google Cloud

- Microsoft Azure

Generative AI track

What Are Foundation Models?

Foundation models are large, pre-trained AI models that can be used for many different tasks. End users rarely work with a model directly. It needs to be wrapped in an application to be useful. For example, GPT-4 is the model; ChatGPT is the application that lets you use it.

Examples:

- OpenAI – GPT-4, GPT-4o

- Anthropic – Claude

- Google – Gemini

- Meta – Llama (open-weight)

- Mistral – Mistral models (open / commercial)

What Is Orchestration in AI?

Orchestration is the layer that coordinates how AI agents work. It manages multi-step reasoning, tool usage, memory, and workflows.

Examples:

- LangChain

- LlamaIndex

- OpenAI Assistants / Agents

- Kortical AI Agent Framework

- CrewAI

- AutoGen

What Are ML Platforms?

ML platforms are AI tooling solutions for building, training, and deploying machine learning models. They let you take your own data and create models for specific business problems like credit decisioning, predictive maintenance, demand forecasting, document classification, and churn prediction.

Think of it this way: foundation models like GPT-4 are pre-built by someone else. ML platforms let you build your own models, trained on your own data, for your specific use case.

Examples:

- Kortical AI Platform

- AWS SageMaker

- Google Vertex AI

- H2O.ai

- DataRobot

What Is the Application Layer in AI?

The application layer is where AI becomes a product that users interact with and pay for. It combines the model with your data, business logic, user experience, and guardrails to solve a specific problem.

LLM applications:

- ChatGPT

- Claude.ai

- Microsoft Copilot

- Midjourney (image generation)

- Harvey AI (AI for Legal)

- K-Chat (customer support AI agent for Shopify stores)

ML applications:

- Credit decisioning dashboards

- Predictive maintenance alerts

- Document classification systems

- Demand forecasting in Power BI/Tableau

Whether it's a chatbot or a dashboard with predictions, if a user interacts with it, it's the application layer.

How Do I Get Started with an AI Agent?

The best starting point is a conversation about the problem you're trying to solve. AI agents can be applied across a wide range of use cases, from automating repetitive workflows to handling complex, multi-step decision-making, but the right approach depends on your data, your systems, and your goals.

Get in touch with Kortical and we'll walk you through our process: from scoping the opportunity through to building and deploying a solution that works in your environment.

Ready to automate real work?

Contact us to see a focused demo and explore the quickest path to production.
