Machine Learning

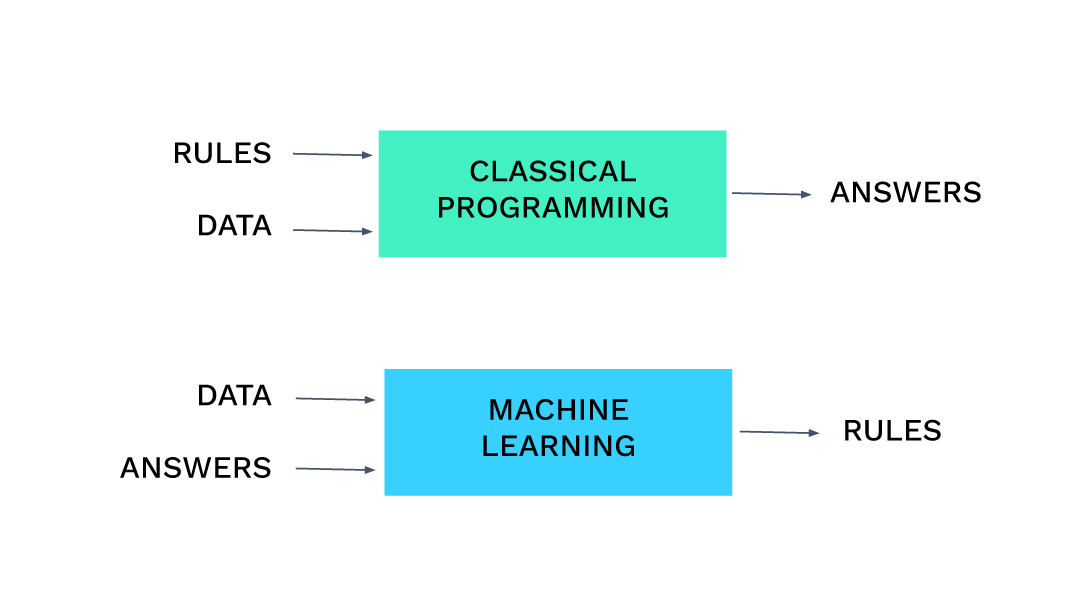

If I feed a machine learning algorithm some sets of inputs along with the answer for each:

| Input 1 | Input 2 | Answer |
|---|---|---|
| 1 | 1 | 2 |
| 3 | 2 | 5 |
| 5 | 1 | 6 |

It might learn that it can match every answer by adding the two inputs together, and so it would add together any future inputs too. If instead we had this set of training examples:

| Input 1 | Input 2 | Answer |
|---|---|---|
| 1 | 1 | 0 |
| 3 | 2 | 1 |
| 5 | 1 | 4 |

It might instead learn that the best way to get from the inputs to the answers is to adjust its internal workings to subtract the second input from the first, and to do the same for future inputs.
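To make this concrete, here is a minimal Python sketch. It assumes the algorithm is ordinary linear least squares with no intercept term (our stand-in; the article does not name a specific algorithm), so the model has the form Answer = w1 × Input1 + w2 × Input2 and "training" means solving for the two weights that best fit the table. The function names are hypothetical.

```python
from typing import List, Tuple

Row = Tuple[float, float, float]  # (input_1, input_2, answer)


def fit_two_weights(rows: List[Row]) -> Tuple[float, float]:
    """Least-squares fit of answer = w1*input_1 + w2*input_2 (no intercept).

    Solves the 2x2 normal equations (X^T X) w = X^T y with Cramer's rule.
    """
    s11 = sum(x1 * x1 for x1, _, _ in rows)
    s12 = sum(x1 * x2 for x1, x2, _ in rows)
    s22 = sum(x2 * x2 for _, x2, _ in rows)
    t1 = sum(x1 * y for x1, _, y in rows)
    t2 = sum(x2 * y for _, x2, y in rows)
    det = s11 * s22 - s12 * s12
    if det == 0:
        raise ValueError("inputs are collinear; the weights are not unique")
    w1 = (t1 * s22 - s12 * t2) / det
    w2 = (s11 * t2 - s12 * t1) / det
    return w1, w2


def predict(weights: Tuple[float, float], x1: float, x2: float) -> float:
    w1, w2 = weights
    return w1 * x1 + w2 * x2


addition_rows = [(1, 1, 2), (3, 2, 5), (5, 1, 6)]
subtraction_rows = [(1, 1, 0), (3, 2, 1), (5, 1, 4)]

add_w = fit_two_weights(addition_rows)     # (1.0, 1.0): add the inputs
sub_w = fit_two_weights(subtraction_rows)  # (1.0, -1.0): subtract the second from the first

print(add_w, predict(add_w, 10, 4))  # (1.0, 1.0) 14.0
print(sub_w, predict(sub_w, 10, 4))  # (1.0, -1.0) 6.0
```

The same fitting code learns two different rules purely from the examples it is given, and then applies each rule to an input pair, (10, 4), that it never saw in training.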

The really clever part is the algorithm that, given a set of example inputs, works out what the right answer should be for a wide range of inputs and problem types. This is the machine learning algorithm, and there are many different types: deep neural networks, extreme gradient boosting and k-means, to name just a few.

There are two broad categories of machine learning algorithms:

Supervised machine learning

This is where you have examples of both the inputs and the answers you want for those inputs, as in the addition example above. You feed these to the algorithm in a “training” phase, and the algorithm adapts itself in different ways depending on what type of machine learning algorithm it is. Once training is complete, the aim is that the algorithm will have learned to produce the right answers for future, as-yet-unseen sets of inputs.

Unsupervised machine learning

These are machine learning algorithms that try to provide insight into data without a defined answer to replicate. There are a number of theoretical uses, but in practice they are mostly used to find natural groups or clusters within data. This definition often leads people to think that customer segmentation and other grouping exercises are a natural fit for unsupervised machine learning, but in fact the best results are most often achieved using supervised machine learning methods.
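As an illustration of the clustering use case, here is a minimal sketch of k-means (one of the algorithms named earlier) in Python. The two-feature customer data is made up, and starting the centroids at the first k points is a simplification to keep the result deterministic; real implementations usually use randomised or k-means++ initialisation.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def k_means(points: List[Point], k: int, iterations: int = 20) -> Tuple[List[Point], List[int]]:
    """Plain k-means (Lloyd's algorithm) with a deterministic start.

    Centroids start at the first k points; each iteration assigns every point
    to its nearest centroid, then moves each centroid to the mean of its points.
    """
    centroids = list(points[:k])
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid by Euclidean distance
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append((sum(x for x, _ in members) / len(members),
                                      sum(y for _, y in members) / len(members)))
            else:
                new_centroids.append(centroids[c])  # leave an empty cluster where it was
        if new_centroids == centroids:
            break  # converged: assignments no longer move the centroids
        centroids = new_centroids
    return centroids, labels


# Hypothetical customers described by two numeric features
points = [(1.0, 1.0), (8.0, 8.0), (1.5, 2.0), (8.5, 9.0), (1.2, 0.8), (9.0, 8.5)]
centroids, labels = k_means(points, k=2)
print(labels)  # [0, 1, 0, 1, 0, 1]
```

No answers are supplied anywhere: the algorithm finds the two groups from the structure of the data alone, which is what makes it unsupervised.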

A machine learning model

Problems you can solve with machine learning

Our take is that there are 3 main applications for machine learning.

1. Predicting the future (it’s got a little harder in 2020)
   - Demand forecasting
   - Will my customers churn?
   - etc.
2. Hyper-personalisation / segmentation to 1
   - Customised offers
   - Customised product recommendation
   - etc.
3. Automation (generally the highest ROI)
   - Back office process automation
   - Email response automation
   - etc.

Training data

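With only the three subtraction examples above, the algorithm could arrive at either of these:

$$\text{Answer} = \text{Input1} - \text{Input2}$$

Or

$$\text{Answer} = -2 + \text{Input1} + \text{Input2} - 2\,(\text{Input2} - 1)^2$$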

Either of these formulas reproduces every training example listed above, but the second would give some very strange results on new data if we were expecting a simple subtraction. Having more training examples limits how wrong a model can be while still fitting all the answers.
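A quick check in Python makes the point. It uses the second formula in the form -2 + Input1 + Input2 - 2 × (Input2 - 1)², which matches all three subtraction examples (because (Input2 - 1)² equals Input2 - 1 whenever Input2 is 1 or 2) but departs from subtraction everywhere else. The extra training row (7, 3, 4) is a made-up example consistent with subtraction.

```python
from typing import Callable, List, Tuple

Row = Tuple[int, int, int]  # (input_1, input_2, answer)


def simple_model(x1: int, x2: int) -> int:
    return x1 - x2


def convoluted_model(x1: int, x2: int) -> int:
    # -2 + Input1 + Input2 - 2 * (Input2 - 1)^2
    return -2 + x1 + x2 - 2 * (x2 - 1) ** 2


def fits(model: Callable[[int, int], int], rows: List[Row]) -> bool:
    """True if the model reproduces every answer in the rows exactly."""
    return all(model(x1, x2) == y for x1, x2, y in rows)


training = [(1, 1, 0), (3, 2, 1), (5, 1, 4)]
print(fits(simple_model, training), fits(convoluted_model, training))  # True True
print(simple_model(10, 4), convoluted_model(10, 4))                    # 6 -6

# One more example, this time with Input2 = 3, rules the convoluted formula out
extra_row = (7, 3, 4)
print(fits(simple_model, training + [extra_row]),
      fits(convoluted_model, training + [extra_row]))                  # True False
```

Both formulas are perfect on the original three rows, yet they disagree badly on (10, 4); a single extra, more varied example is enough to eliminate the convoluted one.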

Test set

Depending on the problem we’re solving, we might care about how many times the model’s answers were exactly right, or about the average error of its answers, and so on. Different statistical measures evaluate performance for different purposes. We apply them to the test set, a set of examples held back from training, to score, evaluate and compare the models.
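A minimal Python sketch of two such measures, the exact-match rate and the mean absolute error. The test answers and the predictions from two hypothetical models are made up; they show that which model counts as "best" depends on the measure that matches the business need.

```python
from typing import List


def exact_match_rate(answers: List[float], predictions: List[float]) -> float:
    """Fraction of test examples where the prediction is exactly right."""
    hits = sum(1 for a, p in zip(answers, predictions) if a == p)
    return hits / len(answers)


def mean_absolute_error(answers: List[float], predictions: List[float]) -> float:
    """Average size of the error, ignoring its sign."""
    return sum(abs(a - p) for a, p in zip(answers, predictions)) / len(answers)


# Hypothetical held-out test answers and two models' predictions
test_answers = [3, 0, 7, 2]
model_a = [3, 0, 7, 9]  # exactly right 3 times out of 4, but one answer is far off
model_b = [4, 1, 6, 3]  # never exactly right, but always within 1

for name, preds in [("A", model_a), ("B", model_b)]:
    print(name, exact_match_rate(test_answers, preds), mean_absolute_error(test_answers, preds))
# A 0.75 1.75
# B 0.0 1.0
```

Model A wins on exact matches, while model B wins on average error, so the measure has to be chosen before the models are compared.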

Better models translate directly into better ROI, so making sure you have the best model is important.

Machine learning isn’t just models

Algorithms like deep neural networks are highly configurable. They consist of a user-defined number of layers, of different types and with different numbers of neurons on each layer, among a whole host of other parameters. Choosing different values for these settings lets the algorithm train better models for different business problems. Extreme gradient boosting has a completely different set of parameters that determine how well it will be able to learn to solve a particular problem.
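The effect of these settings can be shown on a toy scale. The sketch below is a stand-in, not a neural network: a one-weight linear model trained by gradient descent, where the learning rate plays the role of a user-chosen hyperparameter. Each candidate value is trained on the same made-up data and scored on separate, equally made-up validation examples, and the best-scoring value is kept.

```python
from typing import Dict, List


def train(xs: List[float], ys: List[float], learning_rate: float, steps: int = 50) -> float:
    """Fit y = w * x by gradient descent on mean squared error; returns w."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        grad = sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad
    return w


def mse(w: float, xs: List[float], ys: List[float]) -> float:
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


train_x, train_y = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]
val_x, val_y = [4.0, 5.0], [8.0, 10.0]

# Too small a rate learns too slowly; too large a rate (0.3 here) overshoots and diverges
scores: Dict[float, float] = {}
for lr in [0.001, 0.01, 0.1, 0.3]:
    w = train(train_x, train_y, lr)
    scores[lr] = mse(w, val_x, val_y)

best_lr = min(scores, key=scores.get)
print("best learning rate:", best_lr)  # best learning rate: 0.1
```

The algorithm and data are identical in every run; only the setting changes, yet the results range from nearly perfect to wildly wrong.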

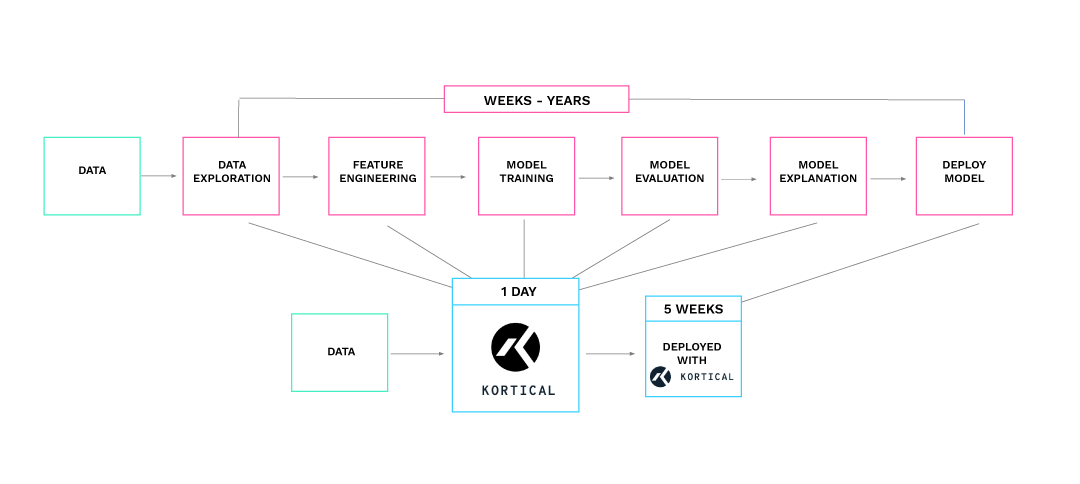

These complexities are why creating machine learning models has traditionally been the domain of data scientists with wide-ranging experience of all these techniques and of what works best. With AutoML, the barrier to entry is now roughly the amount of knowledge in this article.

AutoML (Automated Machine Learning)

With the right AutoML solution, non-experts can get industry-leading results with very little machine learning experience. Models produced on the Kortical platform typically rank in the top 3% of competitive data scientists with no human intervention and, with a few pointers from our team, often win outright.
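Stripped to its core, one part of what AutoML automates is a search: train several candidate algorithms, score each on held-out data, and keep the winner. The sketch below is a toy illustration of that loop under our own assumptions (three simple model families, made-up data, mean absolute error as the score); it is not how the Kortical platform works internally.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Model = Callable[[float], float]
Fitter = Callable[[List[float], List[float]], Model]


def fit_mean(xs: List[float], ys: List[float]) -> Model:
    avg = mean(ys)
    return lambda x: avg  # always predicts the training average


def fit_linear(xs: List[float], ys: List[float]) -> Model:
    # Ordinary least squares for y = a + b * x
    x_bar, y_bar = mean(xs), mean(ys)
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum((x - x_bar) ** 2 for x in xs)
    a = y_bar - b * x_bar
    return lambda x: a + b * x


def make_knn(k: int) -> Fitter:
    def fit_knn(xs: List[float], ys: List[float]) -> Model:
        def predict(x: float) -> float:
            # Average the answers of the k closest training inputs
            nearest = sorted(zip(xs, ys), key=lambda pair: abs(pair[0] - x))[:k]
            return mean(y for _, y in nearest)
        return predict
    return fit_knn


def auto_select(candidates: Dict[str, Fitter], train_x, train_y, val_x, val_y) -> Tuple[str, Dict[str, float]]:
    """Train every candidate, score it on validation data by MAE, return the best."""
    scores = {}
    for name, fit in candidates.items():
        model = fit(train_x, train_y)
        scores[name] = mean(abs(model(x) - y) for x, y in zip(val_x, val_y))
    return min(scores, key=scores.get), scores


train_x = [1, 2, 3, 4, 5, 6]
train_y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]
val_x, val_y = [7, 8], [14.0, 16.0]

candidates = {"mean": fit_mean, "linear": fit_linear, "knn_1": make_knn(1), "knn_3": make_knn(3)}
best, scores = auto_select(candidates, train_x, train_y, val_x, val_y)
print(best)  # linear
```

A real AutoML system searches far larger spaces of algorithms, settings and data preparation steps, but the principle of letting held-out scores pick the model is the same.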

AIaaS (AI as a service)

Offering models as a service greatly eases their use: easy-to-use integrations with tools such as Power BI and Excel mean that once the models have been created, they can be put to use straight away without needing to involve coders at all.
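To show what "as a service" means in practice, here is a minimal sketch of a model behind an HTTP endpoint using only Python's standard library. The `/predict` path, the JSON shape `{"inputs": [...]}` and the subtraction "model" are all hypothetical and are not Kortical's API; the point is that any tool able to make an HTTP request can get predictions without touching model code.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(inputs):
    # Hypothetical stand-in for a trained model: the subtraction rule from earlier
    return inputs[0] - inputs[1]


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/predict":
            self.send_error(404, "unknown endpoint")
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length))
            answer = predict(payload["inputs"])
        except (ValueError, KeyError, IndexError, TypeError):
            self.send_error(400, 'expected JSON like {"inputs": [5, 1]}')
            return
        body = json.dumps({"answer": answer}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence the default per-request logging


def make_server(port: int = 8000) -> HTTPServer:
    return HTTPServer(("127.0.0.1", port), PredictHandler)
```

Calling `make_server().serve_forever()` starts it locally; a request such as `curl -X POST http://127.0.0.1:8000/predict -d '{"inputs": [5, 1]}'` then returns `{"answer": 4}`. A production service would add authentication, input validation and monitoring on top.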

MLOps (Machine learning operations)

The management, governance, monitoring, health management, deployment, diagnostics and business metrics of this process, through the entire model lifecycle, are often referred to as MLOps.
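Monitoring is one of the easier pieces to illustrate. A minimal sketch, assuming the true answers eventually arrive for live predictions: track the rolling mean absolute error over the most recent predictions and flag the model for review when it crosses a threshold. The class name, window size and threshold are hypothetical choices for illustration.

```python
from collections import deque
from typing import Deque


class ErrorMonitor:
    """Tracks the mean absolute error of recent live predictions.

    window: how many recent predictions to average over
    threshold: rolling error above which the model should be reviewed
    """

    def __init__(self, window: int = 5, threshold: float = 1.0) -> None:
        self.errors: Deque[float] = deque(maxlen=window)  # oldest errors drop off automatically
        self.threshold = threshold

    def record(self, predicted: float, actual: float) -> None:
        self.errors.append(abs(predicted - actual))

    def rolling_error(self) -> float:
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def needs_attention(self) -> bool:
        # Only judge once the window is full, so one early miss does not raise an alarm
        return len(self.errors) == self.errors.maxlen and self.rolling_error() > self.threshold


monitor = ErrorMonitor(window=3, threshold=1.0)
for predicted, actual in [(4, 4), (6, 5), (2, 2)]:
    monitor.record(predicted, actual)
print(monitor.needs_attention())  # False: rolling error is 1/3

for predicted, actual in [(3, 6), (1, 4)]:  # the model starts drifting
    monitor.record(predicted, actual)
print(monitor.needs_attention())  # True: last three errors are 0, 3, 3
```

In a full MLOps setup an alert like this would feed into retraining or rollback decisions rather than just printing a flag.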

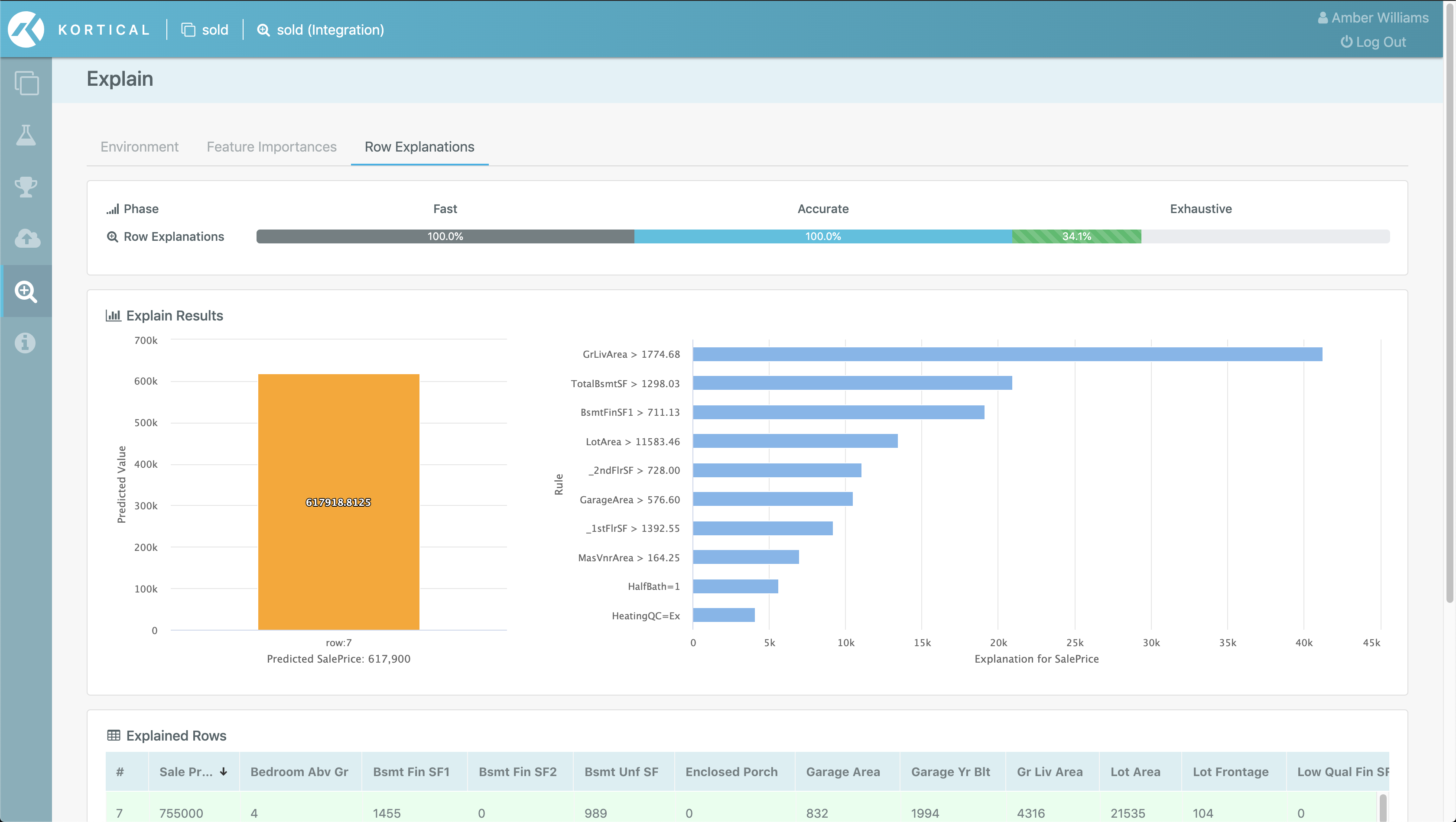

Explainable AI

Being able to explain how a model reaches its decisions is valuable for three main reasons:

1. Uncovering and removing unwanted bias from the data
2. Responding to GDPR / regulator requests about the decision-making process
3. The often-overlooked benefit: it allows senior stakeholders to understand how the machine learning works and to build enough confidence to sign off the budget for putting ML solutions live and delivering ROI
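One widely used, model-agnostic explanation technique is permutation feature importance: shuffle one input column at a time and measure how much worse the model gets. The sketch below is our own illustration, not necessarily the method any particular platform uses; the model, the data and the ignored third feature (imagine a sensitive attribute you want to confirm the model is not using) are hypothetical.

```python
import random
from statistics import mean
from typing import Callable, List, Sequence

Row = List[float]


def permutation_importance(model: Callable[[Sequence[float]], float],
                           rows: List[Row], answers: List[float],
                           repeats: int = 20, seed: int = 0) -> List[float]:
    """Average increase in mean absolute error when each feature column is shuffled.

    A feature the model relies on produces a large increase; a feature the
    model ignores produces none.
    """
    rng = random.Random(seed)  # seeded so the result is reproducible

    def mae(data: List[Row]) -> float:
        return mean(abs(model(r) - a) for r, a in zip(data, answers))

    baseline = mae(rows)
    importances = []
    for col in range(len(rows[0])):
        increases = []
        for _ in range(repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            permuted = [r[:col] + [v] + r[col + 1:] for r, v in zip(rows, shuffled)]
            increases.append(mae(permuted) - baseline)
        importances.append(mean(increases))
    return importances


def model(r: Sequence[float]) -> float:
    # Hypothetical trained model: uses features 0 and 1, ignores feature 2
    return r[0] - r[1]


rows = [[1, 1, 7], [3, 2, 4], [5, 1, 9], [8, 3, 2], [6, 4, 5], [9, 2, 1], [4, 0, 8], [7, 5, 3]]
answers = [model(r) for r in rows]
print(permutation_importance(model, rows, answers))  # third score is 0
```

A zero score for a sensitive feature is evidence, though not proof, that the model does not use it directly; other features could still act as proxies for it.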

Kortical

You can prototype a full, web-hosted ML app in a day or complete a full enterprise AI transformation in 6 months, which is 16x faster than industry averages.

Using tried-and-tested technology massively de-risks projects: Kortical projects have a 92% success rate, versus an industry average of 15%.

The machine learning models and solutions produced on Kortical’s platform have convincingly won competitions against the biggest players in the market. This means that non-data scientists can focus on solving the domain-specific parts of the problem and let Kortical handle the machine learning.

The Kortical team have wide-ranging experience of getting leading results across many industries and are always there to help out.

If you are looking to deliver more ROI with ML faster, then please do get in touch below.

Get In Touch

Whether you're just starting your AI journey or looking for support in improving your existing delivery capability, please reach out.
