AI supply chain optimisation for platelets to reduce costs

54% LESS EXPIRES

100% LESS AD HOC TRANSPORT

6 MONTH FULL DIGITAL TRANSFORMATION

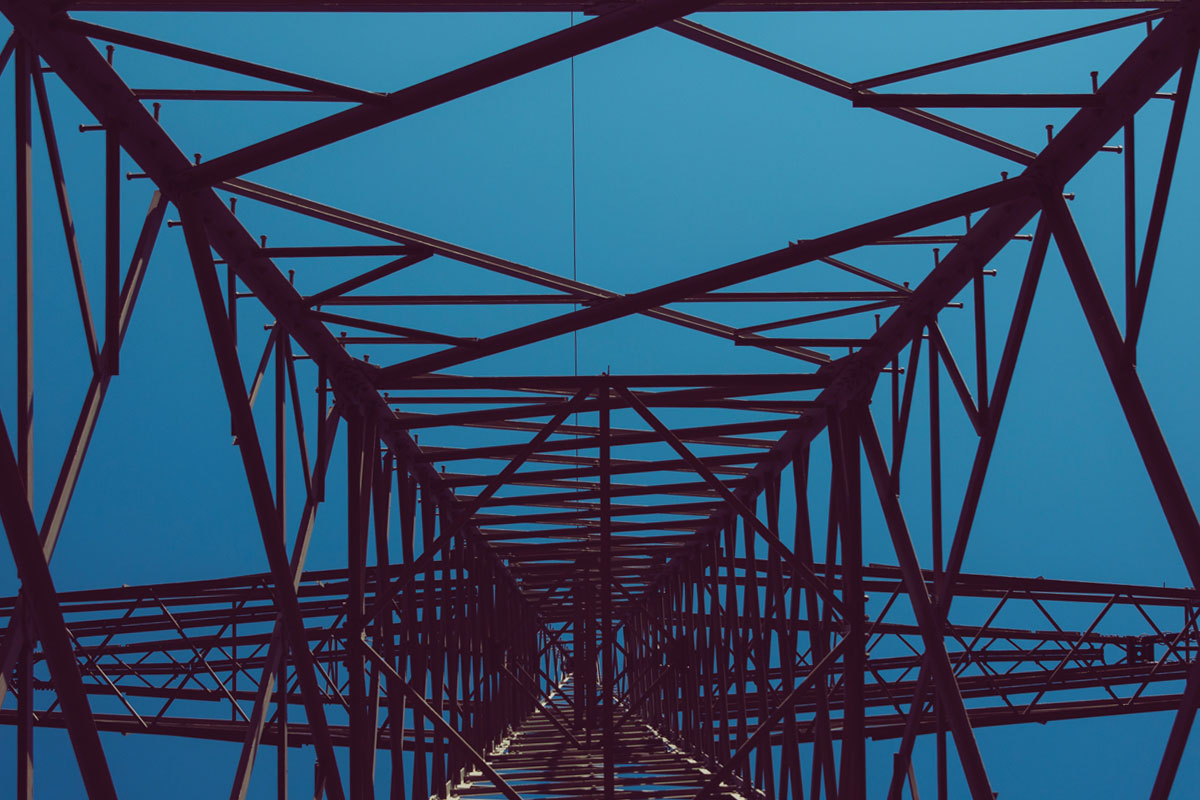

There are 22,000 mobile network towers throughout the UK. Consumer tolerance for downtime is at an all-time low, and uptime is key to retaining customers and protecting brand perception. Kortical is helping spot 52% of failures before they happen.

The project started with a workshop to determine the optimum starting point, and the model build was completed in 6 weeks. The project is currently in pilot, with the ambition to integrate once success has been proven on live data in the wild.

Workshop: 4 hours

Build Models: 6 weeks

Pilot: 6 months

Integrate: TBC

Gartner research shows AI / ML projects typically take 1 to 2 years to get to pilot. Below is an outline of how the value of the use case was proved in 6 weeks using Kortical.

Kortical was introduced to MBNL through Andrew Burgess, an AI strategy advisor, to build an AI solution that predicts which equipment in the towers will fail, with enough notice for MBNL to take action. There was a wide variety of disparate datasets throughout the company to draw upon, from support ticket data, to manufacturer data for the equipment, to power meter data at the sites. We worked with the team to identify which datasets would be predictive of equipment failure, and even how to spot already-failed equipment from their data. The data ranged in format from signal data, to text requiring NLP, to more tabular data. The Kortical platform can consume all of these data feeds with a small amount of preprocessing and then use best-in-class, proprietary, cloud-scale AI to build over 50,000 machine learning solutions to solve this problem within the 6-week time frame.

We understand that the best results come from combining human insight with powerful AI that automates what can be automated. Kortical therefore allows for rapid iteration, getting the best of both human and machine. Another key advantage is that Kortical produces production-ready models, which greatly simplifies moving from proof of value to live trials.

AI / ML models such as deep neural networks are typically called black boxes because you can't see how they arrived at their decisions. Kortical, however, can explain the decision-making process of any AI / ML model and provide cues as to which datasets to join in to improve performance.

6 weeks is not a long time to come into a business area, understand the nature of the issues and validate the results, never mind to actually build a world-class machine learning solution. Robust proof is therefore paramount. In this instance, we took the preceding 3 years of data but held back the last 6 months to use as a test set. The models were built with the 2.5 years of data up to half a year ago, and their performance was tested against the held-back 6 months, which served as a proxy for new data that was not visible to the models when they were trained. By showing how well a model predicts incidents it hasn't seen, confidence can be built even without a deep understanding of how the models themselves work.
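The hold-back approach described above can be illustrated with a minimal Python sketch. It is not Kortical's implementation: the record layout, the `temporal_holdout` helper, the dates and the monthly granularity are all assumptions made for illustration.

```python
from datetime import date

def temporal_holdout(records, cutoff):
    """Split records by date: rows before `cutoff` are used for training,
    rows on or after `cutoff` are held back for testing."""
    train = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return train, test

# Hypothetical data: one record per month over 3 years (36 months).
records = []
for i in range(36):
    year = 2016 + i // 12
    month = i % 12 + 1
    records.append({"date": date(year, month, 1), "failed": i % 5 == 0})

# Hold back the final 6 months as a proxy for unseen data.
cutoff = date(2018, 7, 1)
train, test = temporal_holdout(records, cutoff)
print(len(train), len(test))  # 30 6
```

Splitting by date rather than at random matters here: a random split would let the model train on records that come after some of the test records, which would overstate how well it predicts the future.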

Predicting over 50% of failures more than a month in advance was deemed an excellent result for the proof of value, and as such we've moved to a live pilot. The purpose of the live pilot is to remove all potential doubt about the results in a cost-effective manner, before the larger investment of integrating AI into business-as-usual processes.
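As a rough illustration of how a figure like this could be measured on held-back data, the sketch below counts a failure as predicted only if an alert for the same equipment was raised at least 30 days beforehand. The source does not describe the actual metric, so the `early_warning_recall` function, the 30-day threshold and the sample data are all hypothetical.

```python
from datetime import date, timedelta

def early_warning_recall(failures, alerts, min_lead=timedelta(days=30)):
    """Fraction of failures preceded by an alert on the same equipment
    raised at least `min_lead` before the failure date."""
    if not failures:
        return 0.0
    caught = 0
    for equipment_id, failed_on in failures:
        if any(eq == equipment_id and raised_on <= failed_on - min_lead
               for eq, raised_on in alerts):
            caught += 1
    return caught / len(failures)

# Hypothetical (equipment_id, date) pairs from a held-back period.
failures = [("A", date(2018, 9, 1)), ("B", date(2018, 10, 15)),
            ("C", date(2018, 11, 1)), ("D", date(2018, 12, 1))]
alerts = [("A", date(2018, 7, 20)),   # 43 days ahead: counts
          ("B", date(2018, 10, 1)),   # 14 days ahead: too late
          ("C", date(2018, 9, 15))]   # 47 days ahead: counts
print(early_warning_recall(failures, alerts))  # 0.5
```

This sketch places no upper limit on how old an alert may be and ignores false alarms. A fuller evaluation would also track how many alerts were not followed by a failure.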

Whether you're just starting your AI journey or looking for support in improving your existing delivery capability, please reach out.
