Why Automated Machine Learning (AutoML)?

More accurate models lead to better business outcomes

Co-Founder, CEO & CTO

People often ask ‘Why would a business invest in AutoML?’ The answer is simple: Predicting the future better leads to better business outcomes. Better automated decision making also improves business outcomes. Tailoring experiences precisely for each customer leads to better business outcomes. All of these things work better when powered by AutoML.

Taking credit decisioning as an example, if you can predict exactly who will repay a loan and who won’t, you can offer lower rates while making more profit and therefore gain a larger share of the market.

The same thing applies in almost all machine learning applications:

- Retail: better offers mean more revenue (case study: +56% revenue). In retail, being able to target offers precisely can have huge implications for profitability.

- Automation: better models mean more automation and more savings (case study: 10x productivity). In automation, the more decisions the machine learning model can confidently make, the larger the percentage of human labour it automates.

To illustrate just how important model accuracy can be to a business case, let’s have a look at an anonymised example taken from a real client.

This problem involved searching through transcripts of millions of maintenance logs in order to find critical repairs. The signal-to-noise ratio was extremely low: we saw about 400 critical repairs for every 1 million records.
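To put that ratio in numbers, here is a quick sketch using only the figures quoted above. It shows why plain accuracy would be a misleading metric for this problem: a hypothetical model that never flags anything is right on almost every record while finding no critical repairs at all.

```python
# Figures quoted in the article: about 400 critical repairs per 1 million records.
critical = 400
total = 1_000_000

base_rate = critical / total  # fraction of records that are critical

# A hypothetical "model" that labels every record as non-critical:
trivial_accuracy = (total - critical) / total  # share of records it labels correctly
trivial_recall = 0 / critical                  # share of critical repairs it finds

print(f"base rate: {base_rate:.2%}")
print(f"accuracy of always predicting 'not critical': {trivial_accuracy:.2%}")
print(f"recall of that model: {trivial_recall:.0%}")
```

Ranking metrics such as AUC are less affected by this kind of class imbalance than raw accuracy is.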

The existing process that the model would be automating involved an off-shore team of 50 engineers working over 3-5 weeks to process a single batch. Any critical repairs missed could have potentially dire consequences, so being able to prove that any machine learning solution could equal or improve on the accuracy rate of the human engineers was essential to the success of the project.

As the load on these engineers was expected to increase significantly, a massive additional cost was looming, so the business was keen to offset it by automating as much of the process as possible. These engineers would much rather spend their time on the more complex aspects of their role, but instead find themselves with the mind-numbing task of finding needles in a huge haystack of maintenance logs. As well as being a major cost, this results in high staff turnover. The automated solution would take over a large fraction of the work, allowing significantly more load to be taken on and freeing these engineers to focus on the most interesting parts of their roles.

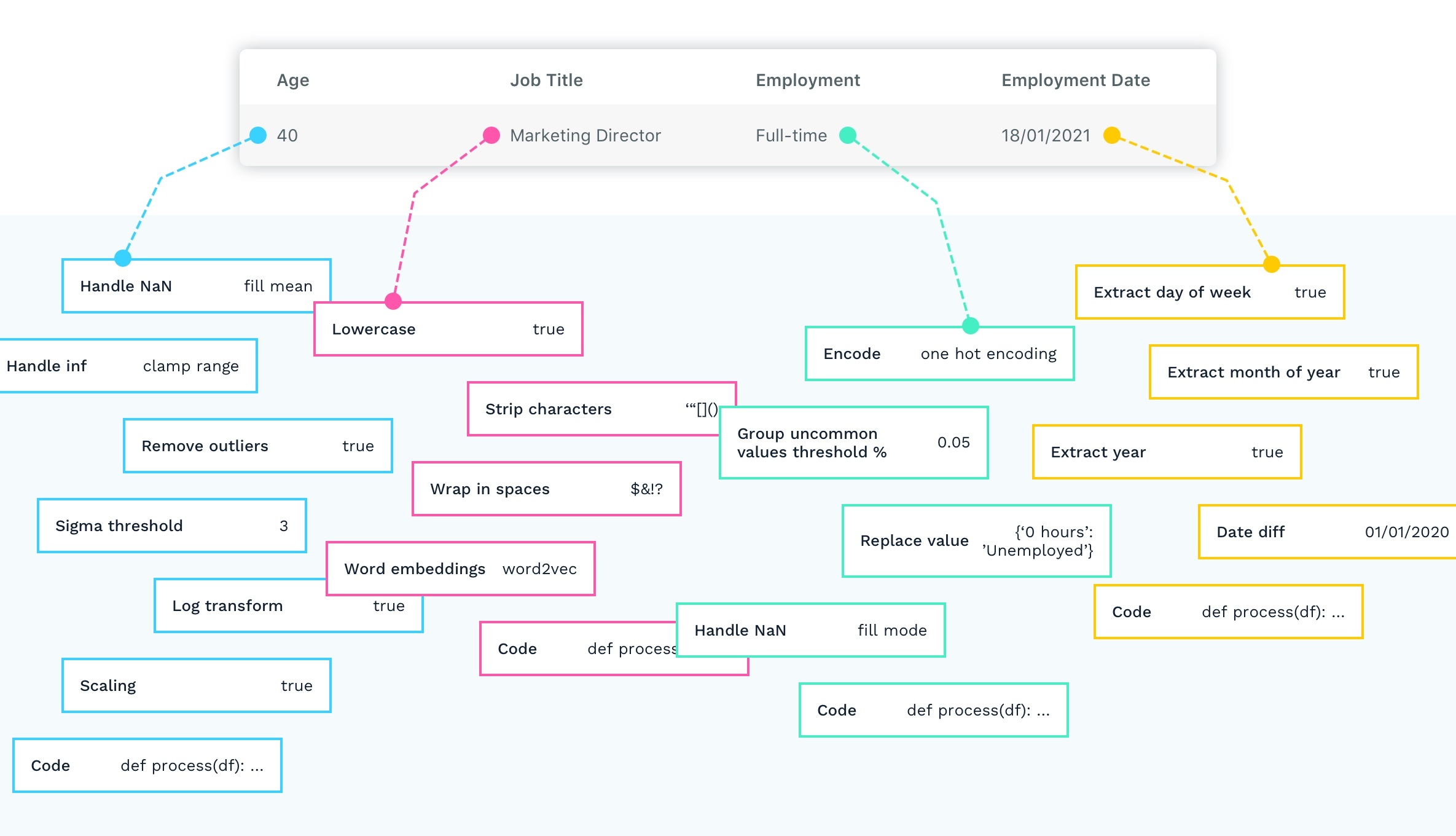

The dataset was a mix of numeric, text and categorical data. After 20 hours of training, the final solution that Kortical discovered involved combining a custom Word2Vec embedding space with an XGBoost model, with both sets of hyperparameters being tuned in parallel over many iterations (so the joint solution was optimised as a single problem).
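To illustrate why tuning both sets of hyperparameters as a single problem can matter, here is a minimal sketch. Everything in it is an assumption made for illustration: the two settings, their values and the `toy_score` function are invented, and the exhaustive grid simply stands in for whatever search strategy Kortical uses, which this article does not describe.

```python
from itertools import product

# Hypothetical settings; the article does not list the real search space.
EMBEDDING_DIMS = [50, 100, 200, 300]   # stand-in for the embedding's hyperparameters
MAX_DEPTHS = [2, 4, 6, 8, 10]          # stand-in for the XGBoost hyperparameters

def toy_score(embedding_dim, max_depth):
    """Made-up validation score, NOT a real training run.

    The best tree depth shifts with the embedding size, so the two
    settings interact and cannot be tuned independently.
    """
    best_depth = 2 + embedding_dim // 50  # 3, 4, 6, 8 for the sizes above
    return 1.0 - 0.02 * abs(max_depth - best_depth) - 0.0001 * abs(embedding_dim - 200)

# Joint search: every (embedding, model) pair is one candidate solution.
joint_best = max(product(EMBEDDING_DIMS, MAX_DEPTHS), key=lambda pair: toy_score(*pair))

# Sequential search: tune the embedding at a fixed default depth, then tune the depth.
default_depth = MAX_DEPTHS[0]
seq_dim = max(EMBEDDING_DIMS, key=lambda dim: toy_score(dim, default_depth))
seq_depth = max(MAX_DEPTHS, key=lambda depth: toy_score(seq_dim, depth))

print("joint:     ", joint_best, round(toy_score(*joint_best), 3))
print("sequential:", (seq_dim, seq_depth), round(toy_score(seq_dim, seq_depth), 3))
```

In this toy setup the sequential search settles on a worse configuration than the joint one, because its first step locks in an embedding size that only looks best at the default depth.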

Let’s compare these results to some standard approaches:

- Linear model (Logistic Regression) with text represented as a bag of words.

- XGBoost with text represented as TF-IDF (XGBoost is considered the Swiss Army knife of tabular data by many data scientists).
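For readers unfamiliar with the two text representations used by these baselines, here is a minimal pure-Python sketch. The log snippets are invented for illustration, and the weighting is the plain textbook form of TF-IDF; libraries such as scikit-learn apply their own smoothing and normalisation, so their numbers would differ.

```python
import math
from collections import Counter

# Toy maintenance-log snippets, invented purely for illustration.
docs = [
    "replaced cracked valve",
    "checked valve no fault",
    "checked filter no fault",
]
tokenised = [d.split() for d in docs]

def bag_of_words(tokens):
    """Raw term counts: each word is weighted only by how often it appears."""
    return Counter(tokens)

def tf_idf(tokens, corpus):
    """Term frequency scaled by inverse document frequency (plain log form)."""
    counts = Counter(tokens)
    n_docs = len(corpus)
    weights = {}
    for term, count in counts.items():
        doc_freq = sum(1 for doc in corpus if term in doc)
        weights[term] = (count / len(tokens)) * math.log(n_docs / doc_freq)
    return weights

bow = bag_of_words(tokenised[0])
weights = tf_idf(tokenised[0], tokenised)
print(dict(bow))
print(weights)
```

Bag of words gives every word in the first snippet the same count, whereas TF-IDF gives "cracked", which appears in only one snippet, a higher weight than "valve", which appears in two.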

| Approach | Accuracy (AUC) on test set | Reduction in error vs human (test set) | Savings (business case) | ROI (business case) |
|---|---|---|---|---|
| Kortical | 0.993 | 26.67% | $504,500 | 561% |
| XGBoost + TF-IDF | 0.971 | -20.00% | $0* | 0% |
| Linear + Bag of Words | 0.918 | -266.67% | $0* | 0% |

*Due to the critical nature of the systems, missing repair information at a higher rate than humans is not an option

You can see that even though the AUC scores of all three models differ by less than 0.1, in the context of this problem that is the difference between making about 27% fewer errors than a highly trained engineer and making over 250% more. This would absolutely have meant the difference between the project succeeding and failing.
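One plausible reading of the "reduction in error vs human" figures is the relative change in missed repairs compared with the engineers. The sketch below assumes that definition. The raw counts are illustrative only (the article does not publish them) and were chosen so that the ratios match the percentages in the table.

```python
def reduction_in_error(human_errors, model_errors):
    """Relative change in missed items versus the human baseline.

    Positive means the model misses fewer items than the engineers;
    negative means it misses more.
    """
    return (human_errors - model_errors) / human_errors

# Illustrative counts only: the article does not publish the raw numbers.
human_missed = 15
examples = {
    "Kortical": 11,
    "XGBoost + TF-IDF": 18,
    "Linear + Bag of Words": 55,
}

for name, missed in examples.items():
    print(f"{name}: {reduction_in_error(human_missed, missed):+.2%}")
```

Under this reading, a negative value means the model misses more critical repairs than the engineers do, which is why the two baselines carry no business case.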

Needless to say, without access to Kortical’s smart AutoML to find that exact configuration of embedding and model, we could easily have spent weeks or months manually trying to come up with an equivalent solution. In fact, Kortical can often beat 97% of competitive data science teams in online competitions like Kaggle in a matter of hours, without any human intervention.

This is hugely material when data scientist time is at a premium and model accuracy is crucial to a business case (as it so often is) - having a tool like Kortical makes a significant difference to project success, cost and delivery time.

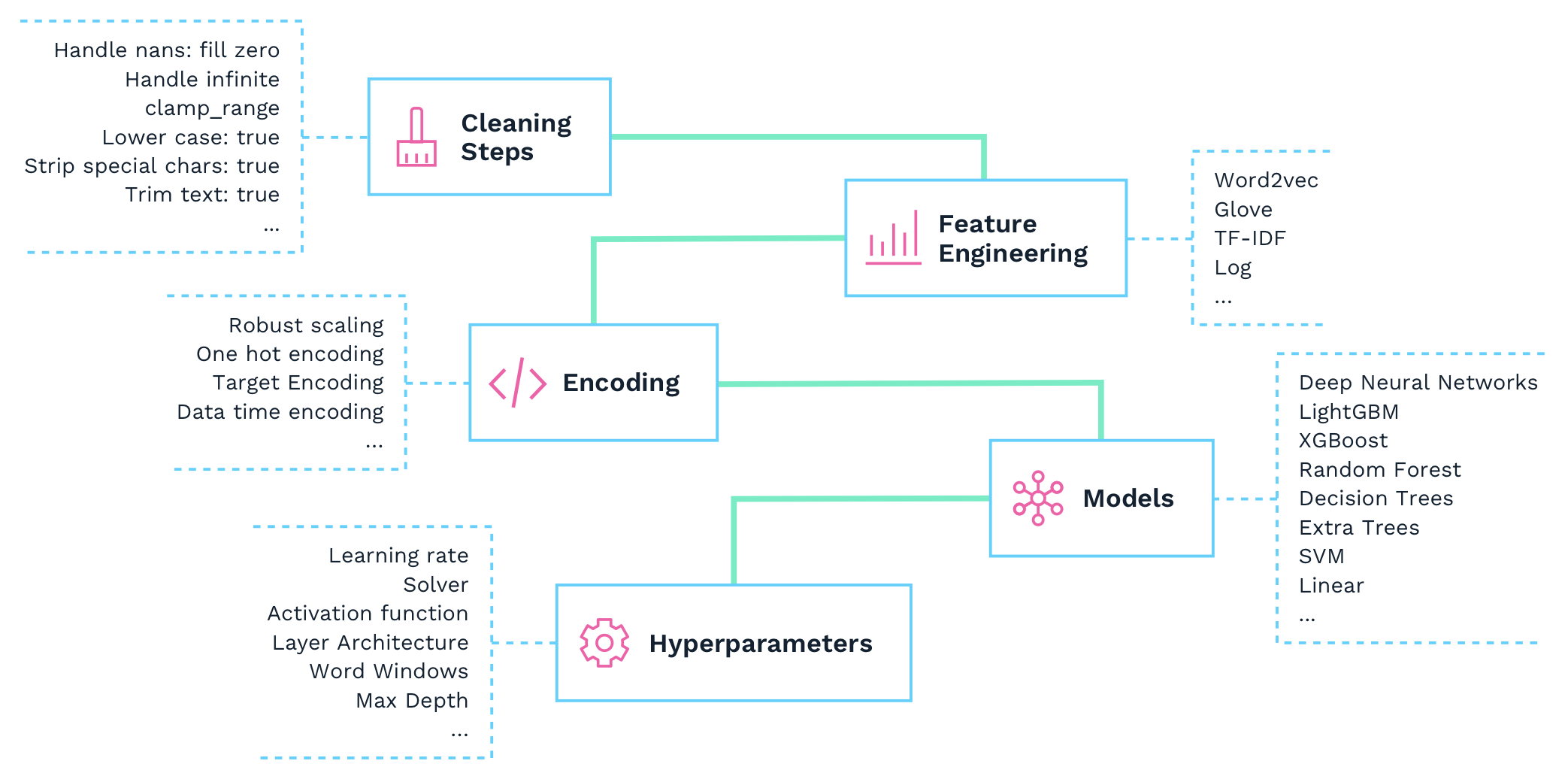

It’s not that AutoML is better than human data scientists; it’s that too many variables, which vary wildly depending on the data, interact for a human to reason about all of them working in concert. Finding the best solution has to be somewhat experiment-driven, and automated machine learning can try thousands of candidate solutions, learning from past attempts, with cloud scale sending that into overdrive.
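As a rough illustration of an experiment-driven search that learns from past attempts, here is a minimal sketch. It is not Kortical’s algorithm: the search space, the `evaluate` placeholder and the explore/exploit rule are all assumptions made for this example, and a real system would train and validate a model where `evaluate` returns a made-up score.

```python
import random

# Hypothetical search space; names and values are illustrative only.
SPACE = {
    "learning_rate": [0.01, 0.03, 0.1, 0.3],
    "max_depth": [2, 4, 6, 8],
    "text_encoding": ["bag_of_words", "tf_idf", "embedding"],
}

def evaluate(config):
    """Placeholder for training and validating a model; returns a made-up score."""
    score = 0.9
    score += 0.03 if config["text_encoding"] == "embedding" else 0.0
    score -= 0.01 * abs(config["max_depth"] - 6) / 2
    score -= 0.02 * abs(config["learning_rate"] - 0.1)
    return score

def run_search(n_trials, explore_prob=0.3, seed=0):
    rng = random.Random(seed)
    history = []  # every (config, score) pair tried so far
    for _ in range(n_trials):
        if not history or rng.random() < explore_prob:
            # Explore: sample a completely fresh configuration.
            config = {name: rng.choice(values) for name, values in SPACE.items()}
        else:
            # Exploit: copy the best past attempt and re-sample one setting.
            config = dict(max(history, key=lambda item: item[1])[0])
            name = rng.choice(list(SPACE))
            config[name] = rng.choice(SPACE[name])
        history.append((config, evaluate(config)))
    return history

history = run_search(n_trials=200)
best_config, best_score = max(history, key=lambda item: item[1])
print(best_config, round(best_score, 4))
```

Each trial in this loop is independent of the others once its configuration is chosen, which is what makes this style of search easy to spread across many machines.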

There are still elements of data science that humans do far better than any automated solution, so the key is to make it easy for humans to take control of those aspects and give them the tools they need to leverage best-in-class assistive ML and cloud scale.

Better business outcomes are only part of the reason to use AutoML platforms like Kortical. Another is speed of delivery and cost. When you ask data scientists why they don’t achieve the same results as AutoML, they say that they spend the vast majority of their time on data wrangling and deployment, and that bringing all the pieces of a solution together leaves them hardly any time for modelling.

So how does Kortical help with the other aspects of a machine learning solution?

- Automated exploratory data analysis to aid in quickly understanding the data.

- Automated data pipelining to clean and transform data.

- Easy customisation of cleaning steps and feature engineering, with instant feedback for rapid improvement.

- Automated testing of various feature encodings, cleaning steps, models and hyperparameters, with cross-validation, held-back test sets, etc.

- A leaderboard for experiment tracking, ranking and filtering.

- Advanced model explainability for any model, including deep neural networks with NLP. Unlike other platforms, Kortical does not use imposter models for feature importances.

- Instantaneous productionisation: 1-click model deployment, model tracking, failover, rollback, UI-based infrastructure adjustment, compute selection, etc.

- Template solutions, the Kortical API and custom app / service hosting, which make Kortical the easiest way to get from notebook to production.

All these steps eat up a huge amount of time and cost in any project and cover a lot of the area where the overlap between data science and data engineering can get complicated. We think these are the main reasons why most Kortical machine learning projects take 1 month to get to the pilot stage vs the 2 years it takes on average according to a Gartner survey.

The third key aspect is project risk. Again, Gartner stats show that only 9% of machine learning projects are successful (with respect to being profitable). If every time you built a web app you built your own custom database and web server at the same time, I imagine very few web apps would be ROI positive! Kortical projects have a 92% success rate.

To sum it all up, if you want better business outcomes, delivered 24x faster, more cheaply and with a project failure rate 83 percentage points lower, you want AutoML. The only question is which provider.
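The headline figures in this summary follow from the numbers quoted earlier. Here is a short sketch of the arithmetic, assuming "project risk" means the share of projects that fail:

```python
# Figures quoted in the article.
typical_months_to_pilot = 24   # "2 years ... on average" (Gartner survey, as cited)
kortical_months_to_pilot = 1   # "1 month to get to the pilot stage"
typical_success_rate = 0.09    # "only 9% ... are successful"
kortical_success_rate = 0.92   # "92% success rate"

speed_up = typical_months_to_pilot / kortical_months_to_pilot

# Failure rates implied by the quoted success rates.
typical_failure = 1 - typical_success_rate
kortical_failure = 1 - kortical_success_rate

# Absolute difference in failure rate, in percentage points.
point_reduction = (typical_failure - kortical_failure) * 100

# Relative reduction in failure rate, for comparison.
relative_reduction = (typical_failure - kortical_failure) / typical_failure

print(f"speed-up: {speed_up:.0f}x")
print(f"failure rate reduction: {point_reduction:.0f} percentage points")
print(f"relative reduction in failure rate: {relative_reduction:.0%}")
```

The figure of 83 is the difference between the two failure rates in percentage points; measured relative to the typical failure rate, the reduction is larger.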

The exact stats and features listed in this article are from Kortical, which has won competitions against other top providers. Kortical was built solving real problems in industry and, as far as we are aware, there is no faster way to create machine learning solutions that perform as well and get them live into production. It was built by data scientists for data scientists, with layers of transparency and control unrivalled by other providers. Because of this, we prefer to call our brand of AutoML "AcceleratedML": it doesn’t automate away a data scientist’s ability to deliver the best solution, but instead accelerates their ability to deliver their desired solution and get the best of man and machine.
